What the Grind Was For

One morning, three AI agents, and a year's worth of thinking compressed into a few hours. The result was exhilarating — and it surfaced a question I can't answer.

Nino Chavez

Product Architect at commerce.com

This morning I wrote a blog post, drafted a whitepaper, and arrived at an argument I wasn’t expecting to make — all before lunch.

That’s not a flex. That’s the setup for the problem.

How It Happened

It started with a conversation in Gemini about the calculator analogy — whether using an LLM to write is “cheating” in the same way using a calculator on a math test might be. That conversation surfaced the Hitchhiker’s Guide parallel: Deep Thought computing for 7.5 million years and returning “42.” Output without derivation. An answer nobody could evaluate because nobody saw the work.

That thread became the blog post — a personal reflection on what’s mine and what’s the tool’s when I write with AI. The math-class memory, the calculator shift, the unresolved question of whether I’m an architect or just an operator with good taste in prompts.

Separately, I’d run deep research on the epistemology of AI-assisted cognition — the Extended Mind thesis, cognitive offloading, Chain of Thought faithfulness, the whole academic landscape around what happens when you hand stochastic tools to knowledge workers. That research didn’t belong in the blog post. Too formal, too dense, too many comparison tables. It became the whitepaper.

Different agents. Different conversations. Different registers entirely.

That’s when I pulled up the archive.

The Pattern I Didn’t See Coming

I reread the whitepaper’s core structural argument: that the 1970s calculator debate was resolved through sequencing. The National Council of Teachers of Mathematics said: master the fundamentals first, then introduce the tool. The calculator was safe because it was an accelerator layered on top of existing competence.

And I realized I’d been writing about the failure of that sequencing for a year.

The Cognitive Foundry — the consulting industry’s apprenticeship model was the sequencing mechanism. Juniors did the grind. Through the grind, they developed judgment. AI eliminated the grind and the training substrate simultaneously.

Who Checks the Foundation? — “Now AI gives everyone the tools of the master. But not the instincts.” The apprentice-journeyman-master progression, broken because the apprenticeship phase got automated away.

The Agentic Student — “Senior Juniors.” Students capable of strategic oversight but missing foundational skills. The tool arrived before the fundamentals.

The Calculator Effect — where I first noticed the atrophy in myself. “It’s not that I can’t do the math. It’s that I stopped trying.”

These posts were written months apart. Different contexts, different prompts, different arguments. But this morning, compressed into a few hours of cross-referencing, the through-line became obvious: every one of them is about what happens when the sequencing breaks.

The Compression Problem

Here’s where this morning gets uncomfortable.

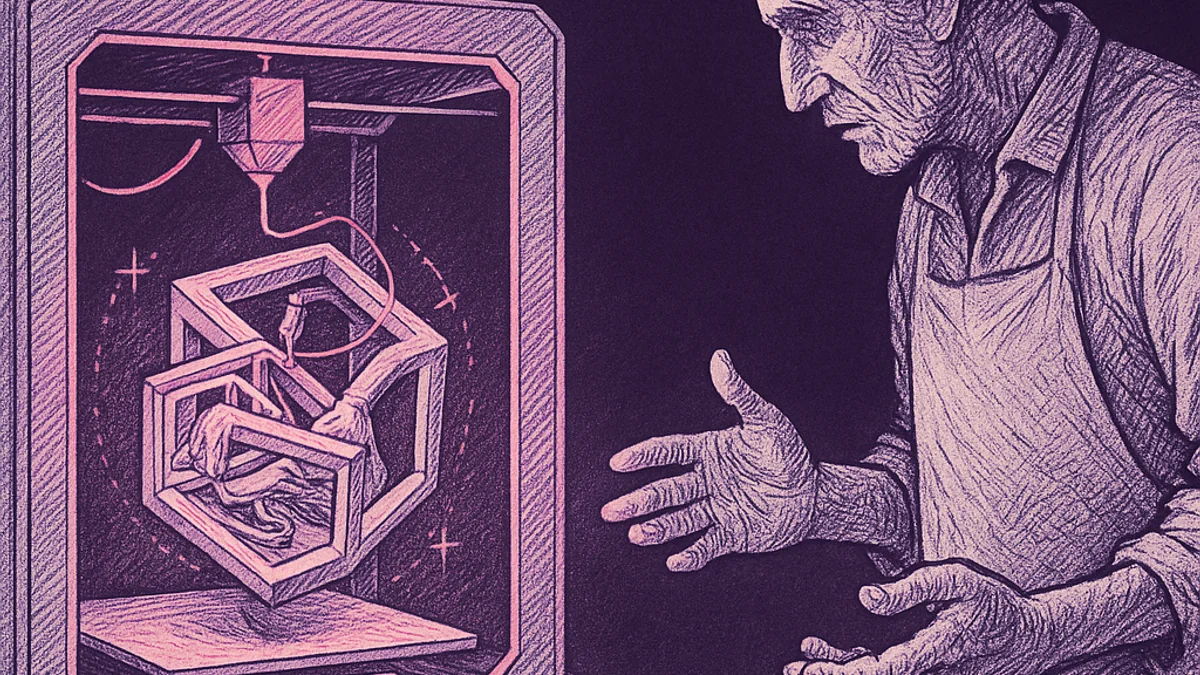

The whitepaper introduces a concept called the “Amplified Mind” — the idea that human-LLM collaboration is qualitatively different from using a calculator. It’s not passive storage and retrieval. It’s co-production. The human provides direction and judgment; the AI provides generative capacity; the product emerges from the interaction.

This morning was a textbook case of the amplified mind at work. I moved across three agents, multiple conversations, different registers — personal reflection, formal analysis, retrospective synthesis — and arrived at a connection that would have taken me weeks to see if I’d been working through it linearly, post by post, without the tools.

The compression was real. The synthesis was real. The pattern recognition — seeing the sequencing problem across a year’s worth of independent posts — that was mine. The AI didn’t draw that line. I did.

But.

I also have twenty-five years of building systems, shipping products, navigating organizational complexity. I have the domain expertise to evaluate what these agents produce. I know when a generated paragraph sounds right but isn’t. I know when a framework is structurally elegant but practically useless. That judgment didn’t come from prompting. It came from the grind.

The grind I’ve been writing about losing.

The Question That Won’t Resolve

The whitepaper prescribes epistemic virtues — vigilance, skepticism, patience, intellectual autonomy. The argument is that if you deploy these virtues actively, using an LLM isn’t cheating. You’re exercising the cognitive discipline to manage generative uncertainty.

But those virtues assume something. They assume you already have the domain expertise to be vigilant about. They assume you’ve done enough of the work by hand to know when the tool’s output smells wrong.

What happens to the person who never did the slow version?

I can compress a year of thinking into a morning because I spent a year doing the thinking first. The posts existed. The arguments had been revised, pressure-tested, sometimes red-teamed. The grind was already done.

A junior developer — the one I wrote about in The Entry-Level Developer, the one who never had to grind through CRUD apps and manual debugging — gets the same tools I do. The same compression. The same synthesis velocity. But without the substrate. Without the judgment that comes from years of feeling the friction.

The amplified mind requires a mind worth amplifying. And the grind is how you build one.

I wrote in the Cognitive Foundry about “Surface Competence” — the appearance of expertise without the foundation to defend it under pressure. This morning’s work wasn’t surface competence. I can defend every argument in that whitepaper. I can point to the lived experience behind every connection.

But could someone who skipped the grind produce something that looks identical?

Probably. That’s what scares me.

What This Morning Actually Was

Three conversations. Three agents. One morning. And at the end of it, a synthesis that connects a personal blog post about math class to a formal epistemological framework to a year-long thread about the death of apprenticeship.

If I’m being honest about what happened: I experienced the amplified mind doing exactly what the whitepaper describes. Generative coupling — my direction and judgment meeting the AI’s capacity, producing something neither of us could have produced alone. Navigational agency — steering across possibility spaces, knowing which paths to take and which to discard.

And the whole time, underneath the exhilaration of that compression, a quieter signal: this only works because I already did the math by hand.

The sequencing problem isn’t theoretical for me. I lived through the sequenced version. Twenty-five years of grind, then the tools. The calculator came after the calculus.

What I can’t figure out — what none of the posts I’ve written have resolved, and what this morning made sharper instead of clearer — is what the sequencing looks like for someone starting now.

Not every problem has an answer. Some just get more precisely stated.

I think that’s where I am — a better version of the question than I had this morning. Which, given that I wrote a whitepaper arguing for the value of problem formulation over problem solving, might be exactly the point.

Addendum: The Verb Problem

Read that last sentence again. “I wrote a whitepaper.”

Did I?

I prompted three AI agents across multiple conversations. I directed the research. I shaped the arguments. I caught the through-line across a year of posts. But I didn’t write the whitepaper. Not in the sense that word used to carry.

This entire post says “I wrote” and “I arrived at” and “I pulled up the archive.” And every one of those verbs is doing work I should probably interrogate. What I actually did was prompt. I described what I wanted. I evaluated what came back. I steered. I rejected. I refined. I recognized when the output matched something true and when it didn’t.

Is that writing?

The post above argues that the amplified mind is real — that the synthesis was mine, the pattern recognition was mine, the judgment was mine. I believe that. But the language I used to describe it tells a different story. “I wrote” is a claim of authorship that papers over the mechanics. It’s comfortable. It’s also not quite honest.

Here’s where it gets recursive. The discomfort I’m feeling right now — about the gap between “I wrote” and “I prompted” — is itself a product of the grind. I know what writing feels like. The friction of finding the right word. The slow assembly of an argument sentence by sentence. The version that gets deleted at midnight. I have twenty-five years of that. So when I say “I wrote” and something doesn’t sit right, it’s because I have a calibration for the verb that the tool didn’t earn.

Someone who’s never done the slow version? “I wrote” might feel perfectly accurate to them. They wouldn’t feel the gap because they never experienced what the word used to cost.

I don’t have a clean resolution here. I directed something into existence this morning. The judgment was mine. The output wouldn’t exist without me. But the verb “wrote” is doing more heavy lifting than it deserves, and I’d rather flag that than pretend I didn’t notice.